OK I admit it! This title is a bit presumptuous, I should have named it something like “My path towards data analysis with python”, but temptation was too great; I had to have a clickbait title for this one… and here you are 😉 .

In my company, there are proper data scientists and we have nothing in common, except for the email address domain 😀 . I am far too realistic to consider my self as a data scientist, but this is more or less the path I am following since some years and I would like to share my view on this.

I was discussing with other python enthusiasts lately. They have the same profile than I and more or less the same goal : Doing advanced analysis with python. However, we found it difficult to clearly define how you should approach your learning path towards this field.

I am a big evangelist about the limitless possibility of your capabilities. I strongly believe that every one can do every thing, with proper dedication and access to the knowledge.

2 motto drove my life / career:

- There is always someone better than you.

- You are not less capable than anyone else.

Therefore I am (always) encouraging everyone to keep learning, at any age and if you like something, it is never too late to learn it. This blog is to show to other digital analysts what is possible to do with python and JavaScript, mostly for Adobe Analytics.

Life Without Background

As said previously, I was discussing with other pythonist and we have the same background. Economics or Marketing backgrounds and trying to realize advanced analysis with programming languages. From this discussion, I felt that without proper Computer Science degree, it was pretty hard to decide on how to improve your data analysis skills.

This field is so vast and there are so many things to learn. You can feel lost and because some companies are not really good at knowing what they want, they give the impression that they are looking for people that are mastering everything.

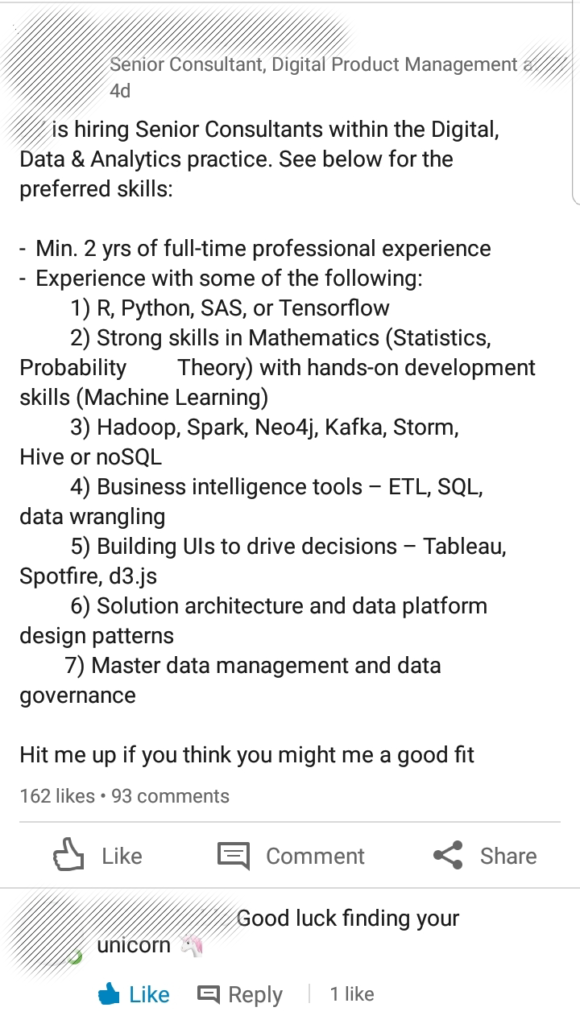

I saw a post lately on LinkedIn and it made me laugh and cry at the same time :

This kind of position offer is bad on so many levels and should be seen as big red flag about this company but let no develop further on this.

The rest of this post will try to give my stake on this subject. What to prioritize in your learning and why did I chose this order. I don’t think I hold the universal truth on this matter but this has worked for me so far. This comes from my personal experience and capability, therefore it may not apply to people with different background and capabilities.

I hope you’ll find some helpful tips there and avoid the mistake I made on my journey. I tried to get it to sum up this journey in 10 steps, let me know if I missed any important one.

Step 1 : Getting an expertise

For the people not coming from Computer Science degree, with specialty on math or statistic, you are probably working on the business side and therefore being quite far away from using code in your daily work. And that is OK !

Because the first step that you should achieve before running to Data Science is to develop a level of expertise in a certain area, several if possible (I recommend that). As said in multiple articles, I was not getting to data science out of my university years. I wandered in different fields (SEO / Testing / Web Analytics) in order to find one that I like. This journey enable me to develop some expertise in different area :

- Online Marketing : Channel management, attribution models, etc…

- Website optimization : Performance optimization, UX testing, Quantitative testing, etc…

- Web Analytics : Javascript tracking, GA, Adobe Analytics, Tag Management, etc…

- Ad Network : Real Time Bidding, Retargeting logic, id sync

I am far from being excellent in any of these but I know enough to be able to realize analysis and understand the business questions for them. Some people are surprised by my journey and think that this is a weakness as I am not THE expert in any domain. I find it the other way around, I can discuss with very different type of people and understand their topics, even if I am not aware of the last Adwords release that enable sub link tracking parameter, or something like that.

This step takes several years but it is worth it. It is much easier to find an interesting position as Data Analyst when you have already a field you master.

Step 2 : Learn the basic of a language

Once you have a certain expertise, you can consider picking a language. In my case Python was my choice but it is not a must. There are a lot of development in the Data Analysis field. C, Java, R, Rust, Julia, even JavaScript has some data science library. Take the one that feels the most natural to you or that make the most sense in your domain.

If you have an expertise in the Software industry, there are high chance that your industry is using C, so it may be a good idea to look at that. If you are working in an industry that requires very fast computing programs (in order of milliseconds) and wants to be on the edge of what is done, Julia might be for you.

This steps is very long and should not be overlooked. I am not recommending the approach : “I will learn it while also learning the data science course”. On the data science classes, they expect you to know what a Dictionary, a JSON format or an Array is. You will have enough to learn with specific math and stats concept, don’t add up programming language on top.

Step 3 : Master Pandas, discover Numpy

Starting this step, I will be focus on Python, as this is the one I chose to develop.

Pandas is the number one library that you should master when you are a data analyst. Basic functionality should be like second language to you. It is very important that you spend time to try things on this, you realize data cleaning and data wrangling and see which techniques are actually working the best for your problems.

Numpy is also a good library to learn. Personally I am not using it extensively as my applications don’t require to be time-computing optimized. But I am using it time to time as some functionalities work good with pandas data frames.

Pandas also enable some easy visualization and this is the subject of my next step.

Step 4 : Data Vizualization

The previous steps were pretty obvious if you know the python universe but once you are arrived at mastering Pandas, you’ll need to look at the next step directly. This step and the 2 following ones could be switched as they are quite independent from each other. It depends on your priority.

As the next step, you can start doing some pandas visualization. As you are going to dig into data and do analysis, it can be quite tough to explain your findings to your colleagues.

Visualization is the under-the-radar field in most data analyst vision. Yet it is one of the most powerful and impacting field you can learn. Everyone struggles to understand lots of data in table. Visualization can make your findings easier to understand and more impacting to your audience.

This is why I would recommend you to learn it as quick as possible. At least the basic with the pandas possibilities. It already covers good basics.

When you are more comfortable with those, you can dig deeper with seaborn, bokeh or dash libraries.

Step 5 : Improve your Python beyond the basics

One of the error I did is that I tried to jump too fast into data science without proper knowledge of python beforehand. Of course, I knew list, loops, dictionaries, etc… However, in order to go further into data analysis, basics are not enough. In a world of optimization, you bet that half of the example are using middle to advanced techniques. Going further without knowing what is a generator or comprehension list is going to be difficult.

I personally felt that my knowledge was not up to the task and found it hard to bridge the gap between basic and intermediate to advanced python. To realize this task, I invested in a book (Fluent Python). I was really happy with it but others may exist to bring you to that level.

Step 6 : Realize one important Project

On your journey to master python data analysis, you can learn as much as you want, if you don’t apply the things you learned, there are good chance that you will have hard time to remember it. It is not a problem, as long as you remember that it is possible, you will be able to find this method back but it can be frustrating and it is definitely not efficient.

Creating a module, or at least a medium size application for your own purpose or for any other reason is the best way to use all of the techniques that you have learned.

“Übung macht den Meister !” : practice makes the master.

And the best way to practice is to actually use the things you learned in real life application. In my case, I have lots of opportunity to test everything in the different API I am creating but it could be anything.

I know someone that tries to create palindrome with Python, another trying to automate his web analytics implementation with Selenium…

Python enables you to be very creative on this as it is very versatile. Enjoy this possibility.

Step 7 : Check your stat and math level

This is not a mandatory step and it will be less and less relevant in the future as most of the complexity will be hidden behind framework. However, I feel it doesn’t cost too much to get back to the basics of vector and matrices in order to understand the basic concept lying behind more advanced algorithms.

On that note, I can only recommend the Khan academy and the youtube channel 3Blue1Brown. Those resources are fantastic to get to a correct level on math and statistics.

Here I would recommend you to not go down the rabbit hole and try to understand the very equation that are lying behind each algorithm but what are the specificity of the algorithms. (Gradient Descent vs Stochastic Gradient Descent, Decision Tree vs Support Vector Machine, etc…)

As said earlier, most of the complexities of these algorithms will disappear for most of the data scientists, only researcher will probably continue to fine tune these algorithm in the future. You have to trust these guys.

Step 8 : Machine Learning with SciKit Learn & XG Boost

“Why should I care with SciKit Learn library ? it is Neuronal Network that I want to learn ! “

This is the thinking of over enthusiast people when they are finally reaching the step to data science. I am not into Neuronal Network yet, so I may change my judgement later on, but what I see in the field proves me that learning “basic” optimization algorithm is still a good use of your time. This for several reasons :

- Basic Algorithm are cheap to use : They don’t require powerful computing power in order to run

- They can be explained : We know how the algorithm works and steps are going to happen in the process.

- Their results can be understood : the process that lead to that result can be reversed engineered.

Because of these reasons, I feel that the SciKit is still going to be there for a while in the data science world. Also, it would be easier for you to apply.

Another library that is worth trying is the XG boost library, it is less known because more specialized in Gradient descent, but it is on my list of learning of a data science journey. You’ll probably hear about it if you join a Hackathon at some point. It is pretty powerful.

Step 9 : Deep Learning with TensorFlow, pyTorch & Keras

I didn’t reach that far into my list so this is wishful thinking at the moment but getting to deep learning is probably the next step you want to realize, once you have done all the previous ones… and if you still have energy to learn further.

Technically Tensorflow can also do basic machine learning capabilities but it is more associated with the Neuronal Network. However, in order to really take advantages of those libraries, it would be recommended to have a computer with Graphic Card capacity, most probably with Nvidia so you can take advantage of the CUDA architecture.

ARM and Intel are working into changing their architecture to match this “vectorization” trend we see in computing but the Nvidia graphic card have some lead over these new processors.

To be honest, the first thing I will learn on this step may not be tensorflow but pyTorch. The library developed by Facebook. I only heard good things about it and we know that tensorflow 2.0 is coming. So pytorch may be the right choice until then. Too bad for me that I already bought a book on tensorflow 1.0 … 🙁

Keras is the easy path for neuronal network usage as it comes on top of other library and create a high level interface. When we talk about high level interface, it means less possible customization. I am pretty sure that this is worth the learning as well as you don’t need a Ferrari to go to buy your baguette. 😉

Step 10 : Data Engineering (pySpark / MLib / Dist-keras)

This step is further away for me because I have no opportunity to actually work in that type of scale environment with distributed system. But if I have the opportunity, I’d love to actually learn how to realize computing operation on distributed system.

On this, I was very hyped when I was at the start of my journey but due to the complexity to set up an environment to learn and the limited application I can do with such knowledge, I let that go for the moment.

Don’t get me wrong, I don’t mean that you should not learn this before the end of your journey, it could be as early as the step 8. However, as I didn’t have the opportunity to actually work with it, and it is really hard to use it on your daily work, I gave it a pass for the moment.

If you know good way to learn this and can recommend good courses, feel free to drop a comment, it will be appreciated.

I hope that this article has helped you to see what to prioritize in your learning and gave you a nice overview of the whole field.

Good post – I can definitely see myself progressing currently somewhere between steps 4 and 5. The data science term in general is too much of a buzzword, as no single human being can master all topics the title entails.

Relevant post of how I sometimes feel about the whole topic: https://towardsdatascience.com/how-it-feels-to-learn-data-science-in-2019-6ee688498029

Nice article on medium.

I am happily leaving between step 6 and 7. Let’s see how far I go. The journey is pretty long and it is not like you have to reach it.

Developing other skills (Marketing, Management) or interests (Philosophie, Sports) is as important.

Can anyone please let me know What do I need to learn to become a data scientist?

I have led decision science teams for big companies and I get this question a lot. I answer it this way. Data science is the combination of domain expertise (like an industry focus), programming, and mathematics. While data science is an awesome field and can be very fulfilling, it can be as frustrating and disappointing as any other career choice, if it isn’t right for you. Many people seem to think about this as a way to get a good job quickly because demand is so high right now. This is not necessarily the case. I encourage people to ask themselves why they want to become a data scientist or any other career choice they may not know much about. Once you answer that for yourself, you can find an awesome career whether it is in data science, engineering, analytics, or something else all together. Here is a link to a “day in the life” post for data scientists who work in corporate settings, that may be helpful.

https://therandomvariable.com/what-does-a-data-scientist-really-do/